Five years ago a typical enterprise data hall ran 4–8 kW per rack, the cooling architecture was raised-floor CRAC with hot/cold aisles, and the operator's biggest thermal worry was a single hotspot above a top-of-rack switch. The cooling design tolerated mistakes. There was margin everywhere.

That margin is gone.

A single NVIDIA GB200 NVL72 cabinet draws roughly 120 kW at full load. An H100-dense rack runs 30–60 kW. Even mid-range AI inference racks now hit 20–30 kW. The old playbooks — built around a 5 kW/rack assumption with 20% growth headroom — break in environments where average density triples in eighteen months and one tenant moves at twice the rate of the rest.

What used to be an MEP problem at handover is now an operational discipline. Thermal management is no longer something you commission and forget. It is something you instrument, model, and re-tune as the load profile changes underneath you.

The cost of getting it wrong

Three failure modes dominate. None of them are dramatic; they are slow, expensive, and largely invisible until they aren't.

Stranded capacity. A hall sized for 5 MW of IT load typically supports only 3.2–3.8 MW once every rack's intake temperature has to stay within spec. Operators pay for the full 5 MW of mechanical and electrical infrastructure but bill on what fits, and what fits is whatever the worst-cooled aisle allows.
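The stranded share follows directly from the two figures above. The sketch below is illustrative arithmetic only; the function name is ours and not part of any tool.

```python
def stranded_fraction(design_mw: float, usable_mw: float) -> float:
    """Share of the design IT capacity that cooling constraints leave unusable."""
    if design_mw <= 0 or not 0 <= usable_mw <= design_mw:
        raise ValueError("expected design_mw > 0 and 0 <= usable_mw <= design_mw")
    return 1.0 - usable_mw / design_mw


# The 5 MW hall from the text, at both ends of the usable range.
for usable in (3.2, 3.8):
    print(f"{usable} MW usable of 5 MW: {stranded_fraction(5.0, usable):.0%} stranded")
```

On those figures, roughly a quarter to a third of the built capacity is paid for but cannot be sold.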

PUE drift. A facility commissioned at PUE 1.35 will drift to 1.45–1.55 over five years if no one re-tunes the cooling against the actual load. Energy is the largest operating cost in the building, and a 0.10 drift on a 5 MW IT load is roughly 4.4 GWh/year extra at the meter. At Indian commercial tariffs that is several crores a year going into the chiller plant for no commercial return.
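The 4.4 GWh figure is easy to reproduce: the PUE drift multiplied by the IT load and the hours in a year. The sketch below assumes the IT load is constant all year, and the tariff is a placeholder chosen only to show the conversion, not a quoted rate.

```python
HOURS_PER_YEAR = 8760


def extra_energy_gwh(it_load_mw: float, pue_drift: float) -> float:
    """Extra annual facility energy in GWh for a given PUE increase.

    Assumes the IT load is constant for the whole year.
    """
    return it_load_mw * pue_drift * HOURS_PER_YEAR / 1000.0


def extra_cost_crore(energy_gwh: float, tariff_inr_per_kwh: float) -> float:
    """Convert extra energy to cost in crore rupees (1 crore = 10 million)."""
    return energy_gwh * 1_000_000 * tariff_inr_per_kwh / 10_000_000


energy = extra_energy_gwh(it_load_mw=5.0, pue_drift=0.10)
print(f"{energy:.2f} GWh/year")  # 4.38 GWh/year

# Placeholder tariff for illustration only; substitute the site's actual rate.
ASSUMED_TARIFF_INR_PER_KWH = 8.0
print(f"{extra_cost_crore(energy, ASSUMED_TARIFF_INR_PER_KWH):.1f} crore/year")
```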

Quiet outages. Inlet temperature breaches rarely cause hard failures. They cause throttled CPUs, retried jobs, voided warranties on storage shelves, and a steady erosion of SLA margin. The data centre keeps running — just less of what you paid for.

The unifying point: all three failure modes are predictable. The information needed to predict them sits in CFD models, telemetry, and rack-level airflow data. Most operators have two of the three and don't combine them.

The cooling architectures, briefly

The architecture sets the ceiling. Here is the rough envelope each one supports:

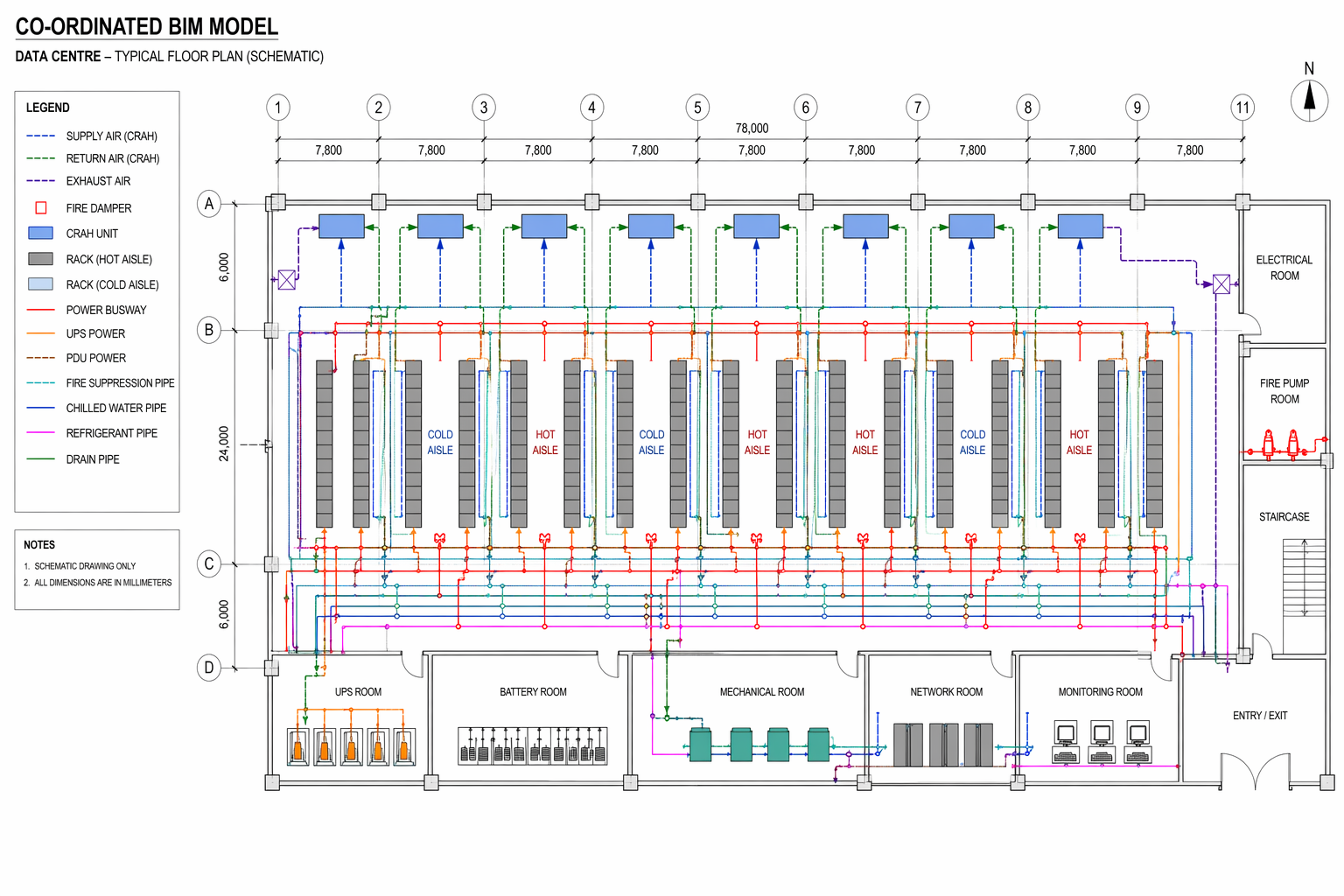

Raised-floor CRAH/CRAC with hot/cold aisle. The legacy default. Comfortable to about 8 kW/rack. Above that, recirculation around the top of the rack starts dominating intake temperatures and cooling-air bypass climbs above 30%.

Cold or hot aisle containment. Doubles the practical density to ~15–18 kW/rack. Gives back roughly 8–14% on PUE for most halls because the cooling-air supply temperature can rise without breaching intake specs. Cheap, well-understood, and chronically under-implemented.

In-row and rear-door heat exchangers. Bring liquid close to the rack. Comfortable to 30–40 kW/rack with rear-door, more with in-row. Useful where the floor plan is fixed but density needs to climb.

Direct-to-chip liquid cooling. Required above ~50 kW/rack. Facility water at 30–45 °C feeds coolant distribution units (CDUs), which are built around plate heat exchangers (see our Heat Exchanger Configurator for sizing) and in turn feed cold plates on each socket. Air handles the residual ~20% of the load.

Immersion. Single-phase for most enterprise deployments; two-phase mostly in HPC. Worth it only where chip TDPs and rack density are high enough to justify the operational complexity.
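The density envelopes above can be written down as a simple lookup, which is handy as a first-pass screen when a rack request arrives. This is a sketch, not a design rule: the ceilings take the conservative end of each range quoted above, the 30–50 kW band is assigned to in-row units as our assumption, and immersion is left out because no density threshold is given for it.

```python
# (ceiling in kW/rack, architecture) in ascending order of density.
# Ceilings are the conservative ends of the ranges quoted in the text.
ENVELOPES = [
    (8.0, "raised-floor CRAH/CRAC with hot/cold aisles"),
    (15.0, "cold or hot aisle containment"),
    (30.0, "rear-door heat exchanger"),
    (50.0, "in-row heat exchanger"),  # assumed band; the text only says "more"
]


def minimum_architecture(rack_kw: float) -> str:
    """Return the lightest architecture whose envelope covers the rack density."""
    if rack_kw <= 0:
        raise ValueError("rack_kw must be positive")
    for ceiling_kw, name in ENVELOPES:
        if rack_kw <= ceiling_kw:
            return name
    return "direct-to-chip liquid cooling"


for density in (5, 12, 25, 120):
    print(f"{density} kW/rack -> {minimum_architecture(density)}")
```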

The mistake operators make is choosing the architecture and then assuming the thermal performance follows. It doesn't. Two halls with identical hardware and identical containment can show 5 °C of intake temperature spread across racks, depending on plenum design, perforated tile layout, and CRAH placement. The architecture sets the envelope; the implementation determines where in that envelope you actually land.

Where CFD fits — building the pre-digital twin

The proposition is straightforward. Before changes are made on the floor, build a validated CFD model that reproduces the current state, then run the change in the model and look at the result. Get the change wrong on a screen, not in the hall.

In practice this means a rack-level model of the data hall: every rack, every CRAH, the raised floor plenum, the ceiling return, perforated tile placement, and load distribution per rack. The model is calibrated against measured intake temperatures at a sample of racks — usually 30–50 measurement points across a typical hall — until predictions agree to within ±1.5 °C. That calibration is the work that separates a defensible CFD model from a colour rendering.
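The acceptance test itself is simple to express. The sketch below compares predicted and measured intake temperatures rack by rack and flags any point outside the ±1.5 °C band. The rack IDs and readings are made-up placeholders, and the function is illustrative rather than part of any CFD package.

```python
def calibration_report(measured_c, predicted_c, tolerance_c=1.5):
    """Compare predicted and measured intake temperatures per rack.

    measured_c / predicted_c: dicts mapping rack id -> intake temperature in degC.
    Returns the worst absolute error and the racks outside the tolerance.
    """
    if not measured_c:
        raise ValueError("need at least one measurement point")
    missing = set(measured_c) - set(predicted_c)
    if missing:
        raise KeyError(f"no prediction for racks: {sorted(missing)}")

    errors = {rack: predicted_c[rack] - measured_c[rack] for rack in measured_c}
    failures = {rack: err for rack, err in errors.items() if abs(err) > tolerance_c}
    return {
        "max_abs_error_c": max(abs(err) for err in errors.values()),
        "failures": failures,
        "calibrated": not failures,
    }


# Placeholder readings for three measurement points.
measured = {"A01": 22.4, "A07": 24.1, "B03": 26.0}
predicted = {"A01": 23.0, "A07": 23.2, "B03": 28.1}

report = calibration_report(measured, predicted)
print(report["calibrated"], sorted(report["failures"]))  # False ['B03']
```

A model that fails at a point like B03 goes back for another round of tuning (tile flow, leakage, CRAH settings) before anyone trusts its what-if results.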

Once calibrated, the model becomes the pre-digital twin of the hall. Every change — adding a rack row, raising supply temperature by 1 °C, swapping an end-of-row CRAH for an in-row unit — is run on the model first. Three things come out: the new intake temperature distribution, the new bypass airflow figure, and a delta on PUE. The change either survives the model or it doesn't.

ROMs for ongoing operations

A CFD model takes hours to run. Operators don't have hours when a rack request lands and a tenant wants a commitment in fifteen minutes.

The fix is a Reduced-Order Model (ROM) — a surrogate trained on hundreds of CFD runs that returns rack-level intake temperatures across thousands of rack and cooling configurations in seconds. The ROM lives in the same dashboard the capacity team already uses. They drag a new rack into a row, the ROM returns the temperature delta, and they either approve or escalate.

Two practical points. First, the ROM has to be retrained as the hall changes — typically once a quarter, or after any structural change to the cooling architecture. Second, the ROM is not a replacement for CFD; it is CFD's operational interface. The full model still runs for major changes; the ROM handles the routine ones.
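To make the idea concrete, here is about the smallest surrogate that could be called a ROM: inverse-distance-weighted interpolation over stored CFD results. This is a stand-in for illustration, not the method we or anyone else necessarily uses; a production ROM would normally rely on a richer technique and many more inputs. The two inputs (rack load and supply temperature), the four stored runs, the scale factors, and the 27 °C approval limit are all assumptions.

```python
import math


def idw_predict(runs, query, scales, power=2.0):
    """Inverse-distance-weighted estimate of rack intake temperature.

    runs:   list of (inputs, intake_c) pairs taken from completed CFD runs
    query:  input tuple for the configuration being asked about
    scales: per-input scale factors so that kW and degC are comparable
    """
    if not runs:
        raise ValueError("need at least one stored CFD run")
    weighted = []
    for inputs, intake_c in runs:
        dist = math.sqrt(
            sum(((a - b) / s) ** 2 for a, b, s in zip(inputs, query, scales))
        )
        if dist == 0.0:
            return intake_c  # the query matches a stored run exactly
        weighted.append((dist ** -power, intake_c))
    total = sum(w for w, _ in weighted)
    return sum(w * t for w, t in weighted) / total


def decide(predicted_intake_c, limit_c=27.0):
    """Approve the request if the predicted intake stays within the limit."""
    return "approve" if predicted_intake_c <= limit_c else "escalate"


# Placeholder training set: (rack load kW, supply temperature degC) -> intake degC.
CFD_RUNS = [
    ((10.0, 18.0), 22.0),
    ((10.0, 22.0), 26.0),
    ((20.0, 18.0), 25.0),
    ((20.0, 22.0), 29.0),
]
SCALES = (10.0, 4.0)

estimate = idw_predict(CFD_RUNS, query=(15.0, 20.0), scales=SCALES)
print(f"{estimate:.1f} degC -> {decide(estimate)}")
```

The point of the sketch is the shape of the workflow: expensive runs happen offline, and the capacity team only ever calls the cheap lookup.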

Operators that adopt this workflow stop running their data halls on conservative envelopes. They run them at the actual envelope, with confidence that the next request won't break anything.

Hotspot prediction in practice

Hotspots are rarely caused by a single failed component. They are caused by interactions — a slightly under-perforated tile, plus a slightly over-loaded rack, plus a CRAH that has been on bypass since the last commissioning, plus three years of cumulative changes nobody updated the documentation for.

CFD finds these because it sees everything at once. Telemetry alone won't: it only sees the racks that have inlet sensors, and most halls don't have a sensor on every rack. The CFD model fills in the gaps and identifies the racks most likely to breach next, ranked by predicted intake temperature under projected load.
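The ranking step can be sketched in a few lines. The example below orders racks by their margin to an intake limit and marks which ones have no inlet sensor, since those are the racks telemetry would miss. The rack IDs, temperatures, and the 27 °C limit are illustrative assumptions.

```python
def rank_breach_risk(predicted_c, sensored_racks, limit_c=27.0):
    """Rank racks by remaining margin to the intake limit, smallest first.

    predicted_c:    dict of rack id -> predicted intake temperature in degC
    sensored_racks: set of rack ids that have an inlet sensor
    """
    rows = [
        {
            "rack": rack,
            "predicted_c": temp,
            "margin_c": limit_c - temp,
            "has_sensor": rack in sensored_racks,
        }
        for rack, temp in predicted_c.items()
    ]
    return sorted(rows, key=lambda row: row["margin_c"])


# Placeholder model output under projected load.
predicted = {"A01": 24.0, "A07": 27.5, "B03": 26.5}
sensored = {"A01", "B03"}

for row in rank_breach_risk(predicted, sensored):
    print(row)
```

In this made-up case the rack closest to breaching, A07, is also the one without a sensor.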

Three patterns repeat across projects:

- Top-of-rack recirculation — hot air leaks back over the top of the rack, raising intake temperature on the upper half. Fix: blanking panels and aisle containment.

- End-of-row starvation — the racks at the end of a row see lower supply pressure and warmer intake. Fix: tile rebalancing or end-of-row CRAH addition.

- Cumulative bypass — perforated tiles installed for racks that have since been removed continue to dump cold air into the return path. Fix: tile audit, retire the unused tiles.

None of these are discoveries. All of them are easy to miss without a model that ties supply, plenum, and rack-level airflow together.
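The cumulative-bypass check in particular is easy to automate once tile and rack inventories exist as data. The sketch below assumes a hypothetical record for each perforated tile (which rack it was installed for, and its airflow) and reports tiles whose rack is no longer active, along with the share of tile airflow they carry. All IDs and airflow values are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tile:
    tile_id: str
    installed_for: str  # rack the tile was originally placed to serve
    airflow_m3h: float  # measured or modelled airflow through the tile


def audit_tiles(tiles, active_racks):
    """Return (orphaned tile ids, share of total tile airflow they carry)."""
    orphans = [t for t in tiles if t.installed_for not in active_racks]
    total = sum(t.airflow_m3h for t in tiles)
    share = sum(t.airflow_m3h for t in orphans) / total if total else 0.0
    return sorted(t.tile_id for t in orphans), share


tiles = [
    Tile("T01", "A01", 900.0),
    Tile("T02", "A02", 900.0),
    Tile("T03", "A03", 600.0),
    Tile("T04", "A04", 600.0),
]
active = {"A01", "A03"}  # A02 and A04 have been removed from the hall

orphans, share = audit_tiles(tiles, active)
print(orphans, f"{share:.0%}")  # ['T02', 'T04'] 50%
```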

A six-step PUE reduction playbook

In rough order of return on effort:

- Audit and contain. Cold or hot aisle containment, blanking panels in every rack, brush grommets on every cable cutout. Cheap, mechanical, and worth 0.05–0.10 on PUE.

- Raise the supply temperature. Most halls run cold-aisle supply at 18 °C when the IT load tolerates 22–24 °C (the ASHRAE recommended envelope for class A1 equipment is 18–27 °C). Each 1 °C increase in supply temperature recovers roughly 1.5–2% of mechanical energy.

- Tune the perforated tile layout. Match tile placement to rack power density. Audit quarterly, not annually.

- Retire over-provisioned CRAH units. A hall with eight CRAHs at 50% utilisation runs less efficiently than the same hall with five CRAHs at 80%. CFD identifies which units to switch off without breaching intake specs.

- Move set points seasonally. Tropical and temperate halls can run different supply temperature curves through the year. Most don't.

- Plan the next change against the model. Stop adding load against the same conservative envelope; use the pre-digital twin to find where the actual capacity is.

Operators that work through this list typically see a 5–15% PUE improvement before any capital expenditure on cooling hardware. For a 5 MW IT load, that saving pays for the modelling work several times over within the first year.
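As a worked example of the supply-temperature step, the sketch below turns the per-degree rule of thumb into a total for moving from 18 °C to 22–24 °C. It assumes the per-degree saving compounds; the playbook does not say whether the figure is linear or compounding, and over a few degrees the difference is small.

```python
def mechanical_saving(delta_supply_c: float, saving_per_degree: float) -> float:
    """Fractional mechanical-energy saving for a supply temperature increase.

    Assumes the per-degree saving compounds with each additional degree.
    """
    if delta_supply_c < 0 or not 0 <= saving_per_degree < 1:
        raise ValueError("expected delta >= 0 and 0 <= saving_per_degree < 1")
    return 1.0 - (1.0 - saving_per_degree) ** delta_supply_c


low = mechanical_saving(22 - 18, 0.015)   # smallest step, lowest rate
high = mechanical_saving(24 - 18, 0.020)  # largest step, highest rate
print(f"{low:.1%} to {high:.1%} of mechanical energy")
```

On these assumptions the move recovers somewhere between about 6% and 11% of mechanical energy, before any other step in the list is applied.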

Liquid cooling, CDUs, and what comes next

Direct-to-chip liquid cooling is no longer optional above 50 kW/rack, and it changes the thermal management problem in two ways.

First, the CDU is the new critical asset. At the core of every CDU is a plate heat exchanger sized against a specific facility water temperature, secondary loop temperature, and rack-side ΔT. Get the sizing wrong and either the chip temperatures climb or the secondary loop pumps work harder than they need to. We use the Numerix Heat Exchanger Configurator for CDU sizing because the underlying physics (NTU-ε with Martin/Kumar correlations) is the same as for a process plate heat exchanger.
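For readers who have not met the NTU-ε method, a minimal sketch follows. It uses the standard counterflow effectiveness relation and takes the overall conductance UA as an input; in a real sizing exercise UA would come from plate correlations such as Martin or Kumar, which are not implemented here. This is not the Configurator's code, and the counterflow arrangement and all numeric values are assumptions for illustration.

```python
import math


def counterflow_effectiveness(ntu: float, c_ratio: float) -> float:
    """Effectiveness of a counterflow exchanger (standard NTU-epsilon relation)."""
    if ntu < 0 or not 0.0 <= c_ratio <= 1.0:
        raise ValueError("expected ntu >= 0 and 0 <= c_ratio <= 1")
    if math.isclose(c_ratio, 1.0):
        return ntu / (1.0 + ntu)  # limiting form for balanced flows
    e = math.exp(-ntu * (1.0 - c_ratio))
    return (1.0 - e) / (1.0 - c_ratio * e)


def cdu_duty_kw(ua_kw_per_k, c_hot_kw_per_k, c_cold_kw_per_k, t_hot_in_c, t_cold_in_c):
    """Heat transferred from the secondary loop (hot) to facility water (cold).

    c_hot / c_cold are capacity rates (mass flow x specific heat) in kW/K.
    """
    c_min = min(c_hot_kw_per_k, c_cold_kw_per_k)
    c_max = max(c_hot_kw_per_k, c_cold_kw_per_k)
    ntu = ua_kw_per_k / c_min
    effectiveness = counterflow_effectiveness(ntu, c_min / c_max)
    return effectiveness * c_min * (t_hot_in_c - t_cold_in_c)


# Placeholder values: balanced 10 kW/K loops, UA = 20 kW/K,
# 45 degC secondary return against 30 degC facility water.
print(f"{cdu_duty_kw(20.0, 10.0, 10.0, 45.0, 30.0):.0f} kW")  # 100 kW
```

Running the same function across the expected range of facility water temperatures shows quickly whether a given exchanger still meets the rack duty on the warmest day.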

Second, the cooling problem splits in two. Liquid handles the high-density processors; air still handles networking, storage, PSUs, and the residual chip load. The air-side CFD model still matters — it just covers a smaller share of the total. Halls being designed today should model both loops together: facility water, CDU, secondary loop, cold plate, and the air-side residual. The model becomes the design tool, not just the post-build diagnostic.
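The split is worth putting numbers on. The sketch below applies the ~20% air-side residual mentioned earlier to a single rack; the fraction is a rule of thumb from this article, not a measured value for any particular hardware.

```python
def split_rack_load(rack_kw: float, air_residual_fraction: float = 0.20):
    """Split a direct-to-chip rack's heat load into (liquid kW, air kW)."""
    if rack_kw < 0 or not 0.0 <= air_residual_fraction <= 1.0:
        raise ValueError("expected rack_kw >= 0 and a fraction between 0 and 1")
    air_kw = rack_kw * air_residual_fraction
    return rack_kw - air_kw, air_kw


liquid_kw, air_kw = split_rack_load(120.0)
print(f"liquid: {liquid_kw:.0f} kW, air: {air_kw:.0f} kW")  # liquid: 96 kW, air: 24 kW
```

On that assumption, the air-side residual of a 120 kW cabinet is about 24 kW, which is more than the 15–18 kW per rack that a contained air-cooled hall handles comfortably.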

How to choose a data centre CFD or BIM partner

Buyers asking us this question are usually evaluating three or four firms in parallel. The best filter we can suggest is not the one that flatters us — it's the one that exposes the practical questions every data centre CFD company and data centre BIM company should be willing to answer plainly.

The following six checks separate firms that have actually shipped data hall work from those that have done one project and put it on the website.

- Calibration tolerance. Ask what intake-temperature accuracy the firm commits to (±1.5 °C is the bar) and how many measurement points they take. If they answer in terms of mesh resolution rather than validation against measurements, they are selling renderings.

- ROM workflow. Can they hand you a ROM your capacity team can run themselves? Or is every what-if a billable callback? The answer reveals whether they treat the model as an artefact you own or a service you rent.

- BIM-to-CFD continuity. Data centre projects need both. A firm that does only one ends up handing off mid-project — the rack layout in BIM doesn't match the load distribution in CFD, the model is rebuilt twice, and small errors compound. Ask whether the same team owns the BIM model and the CFD model. Ours does.

- Liquid-cooling fluency. Above 50 kW/rack the conversation moves to CDUs, secondary loops, and warm-water facility design. A data centre CFD company that can't size a CDU is fine for legacy halls but will slow you down on AI builds.

- References that match your scale. A firm with hyperscale-only references is wrong for a 2 MW colocation; a firm with only enterprise references is wrong for a 50 MW campus. Ask for projects in your size bracket.

- What they say no to. The honest test. Firms that claim to do everything for everyone usually do nothing well. We don't take projects that need wet mechanical design, structural retrofit drawings, or ongoing site supervision — that's not our practice. The exclusions are usually more telling than the inclusions.

If a vendor passes the six checks and the chemistry works, the rest is commercial.

Why Numerix for data centre engineering work

For the visitors who landed here while shortlisting data centre CFD companies or data centre BIM companies, the short version of who we are:

- Based in Bengaluru, India. Delivering globally — data centre projects across India, the Middle East, Southeast Asia, Europe, and the Americas.

- Sixteen years of CFD and thermal practice. Defence launch infrastructure, food processing, water technology, oil & gas, and data centres. Data hall work draws on the same calibrated-model discipline.

- Both sides of the deliverable. The CFD model and the Revit BIM model live with the same team — rack layouts, MEPF coordination, COBie-ready asset data, and the thermal model behind them, coordinated end-to-end.

- ROM-first operational handover. Every CFD engagement ships with a ROM the operator's capacity team can run independently. The model is yours, not ours.

- Liquid cooling and CDU sizing in-house. The Numerix Heat Exchanger Configurator is a productised version of the CDU sizing work we've been doing for years — same NTU-ε / Martin / Kumar physics, packaged as software.

- What we don't do: wet mechanical drawings, structural retrofit, ongoing site supervision, hardware sales. We model, coordinate, and hand over. The construction stays with your contractor.

If that's the shape of partner you're looking for, see our Data Centre Thermal Optimisation page or book a 30-minute call. We'll tell you in the first conversation whether we're the right fit for the project — and if we're not, we'll usually know who is.

The model is the artefact

If there is a single point worth taking from the past five years of data centre thermal work, it is this: the validated CFD model of the hall is the most valuable operational artefact an operator can own.

It outlives any individual engineer's familiarity with the building. It makes the cost of every proposed change measurable before the change happens. It catches the slow drift that telemetry won't.

Build it once, calibrate it properly, retrain the ROM as the hall changes, and use it as the canonical reference for every capacity, cooling, or expansion decision. The hardware in the hall changes every twelve months. The model is the thing that doesn't.

If you are running a data hall and considering a CFD-led thermal review, our team builds these models as standalone projects or as ongoing operational engagements. See our Data Centre Thermal Optimisation page or book a call.